Iztok Franko

We are in the middle of our 2020 Airline Conversion Optimization research, so I can’t think of a better time to talk about your conversion optimization process.

While we see more and more airlines establishing conversion optimization teams and programs, there are still many of you that are not there yet. During my conversion rate optimization (CRO) research, I talk to many airline digital marketing and optimization professionals like you. What I often hear is this:

Iztok, we want to get started with our conversion optimization process, but we don’t have the resources.

Right after resources and budget constraints, the second reason why airlines don’t start doing CRO and experimentation is:

We don’t have understanding and support from our management.

I’ve written about how to put your conversion optimization process on your company’s agenda in the past. We also talked about how to build a culture of experimentation in your airline environment.

However, this is easier said than done. The conversion optimization process and culture do not get started by themselves.

What I see is that in many cases, conversion optimization and experimentation starts with one enthusiast, one person who is really excited about experimentation. Usually this person then becomes a conversion optimization process leader, the internal champion of the CRO program. If you want to become a CRO leader, you need to be curious, have an entrepreneurial mindset, and be passionate about digital and marketing.

But more than anything, if you want to establish CRO and experimentation, you need to be good at explaining its value and educating the key stakeholders in your company. You need to know and be able to talk to your “internal customers” even better than you do with your real customers, your users.

In today’s episode of the Diggintravel Podcast, you can hear from Shiva Manjunath, digital marketing manager at Gartner, who is a huge CRO and experimentation enthusiast.

Listen to him talk about key parts for a great conversion optimization process via the audio player below. You can also read on for the interview recap and the full interview transcript below.

And don’t forget to subscribe to the Diggintravel Podcast in your preferred podcast app to stay on top of the 2020 airline digital trends!

Iztok Franko: Hi, this is Iztok, and we are talking today with Shiva. Shiva, welcome to the Diggintravel Podcast. How is life in Texas?

Shiva: Oh man, it is a lot colder than I thought it would be. It’s like 37 degrees outside right now. But loving it down here in Austin, Texas.

Iztok: This is the new reality of talking to people from around the globe. People from Texas complaining that it’s too cold, while here in Europe it’s too warm.

Shiva: I’m actually from Connecticut, so you would think I’m used to the cold and used to the snow, but I will never get used to the cold. [laughs]

Iztok: Okay, good. Before we start, just a warning to our listeners. We are recording this on Saturday evening, and we both have dogs, so don’t be surprised if there is some unplanned barking happening. [laughs]

Shiva: Yeah, my dog might disagree with some of the things that I say, and he might be vocal about it. [laughs]

Iztok: I’m a huge dog lover, so all good here. So let’s start seriously. Shiva, why I reached out to you is I see you are a big conversion optimization enthusiast, and you really like talking and engaging about CRO, A/B test experimentation. Maybe before we start digging deep into it, how did you end up in experimentation and CRO?

Shiva: I have a weird, crazy story. I originally went to college for physiology and neurobiology, which is a mouthful. I grew up in a family that is veterinarians and surrounded by medicine, so I thought I really wanted to go into medicine. That was my career trajectory. I went to college for that.

As soon as I was out of college did my Bachelor’s, I kind of had a life-changing moment where I was like, “I don’t think I want to do this for the rest of my life,” and I had to make a career shift. One of the things I thought I was really passionate about, honestly, was just marketing and technology. I did a lot of technology stuff on the side. So I thought, “Why don’t I jump into technology and marketing? It’s an interesting field, it’s booming, and it’s been growing ever since, so let me give that a shot.”

I applied for a startup, which was a digital marketing startup, and I worked there and grinded for about 3 years. It fascinated me. Every day I would come in early and I’d look at the clock and be like, “All right, it’s 8:00, get my coffee,” and then look at the clock again and it was like, “Oh my God, it’s 6:00. Where did this day go?”

But just loving it, loving digital marketing, doing testing and all that stuff. It was just super fascinating to me. I kind of lucked into that, honestly. It wasn’t something that I had necessarily planned, but man, it’s a fascinating field for me, and I love everything about testing.

Iztok: Very interesting. How did you start learning, or how did you go from general digital marketing to more detailed knowledge of CRO and experimentation?

Shiva: Honestly, I worked very closely with someone who had been in this space, the CEO from the first company. He’s a very, very smart person. He was my mentor for 3 years. Worked very closely in terms of learning testing from him, learning about digital marketing principles and things like that.

But the fascinating thing about CRO is when I got into it, it was still nascent. Even if you want to consider nowadays, there’s a lot of companies who still don’t do CRO, so we’re still learning as we go and figuring out the best ways to do CRO. There’s a lot of peers in the org in CRO, and LinkedIn, and just connecting with a bunch of people to talk about it. The CRO community is a very close-knit one – very welcoming, but close-knit. You can share ideas, posts, and people will give you feedback, positive or negative. We’re all just doing this together and learning it together.

So that’s the community part of it, but the personal part of it, I just learned coding on the side, on my own. I learned statistics on the side, on my own. There was a lot of passion for me in testing, so it was actually pretty easy for me to pick up those skills along the way because I just love doing it.

Iztok: One interesting thing that you said – and I also see it in the research area that we do, where we research in detail how airlines do conversion optimization – you said that there are still many companies that don’t do CRO. This is what I see also in our field. I see some companies that are very developed, that have quite advanced testing of UX problems, while others are still trying to figure it out, trying to even understand what it is.

I saw that in the past you established CRO programs in different organizations. What do you see as the key first step to get started, to even introduce conversion optimization in an organization that is new to it?

Shiva: That’s a really good question. One of the things is as a baseline, the culture has to just be receptive to testing, honestly. If you have an organization largely driven by HiPPO – which is “highest paid person’s opinion,” something like that – if you have that kind of organization, it’s going to be a very, very difficult, uphill battle.

If you’re part of an organization that is data-driven and is open to – and not only open to, but will refuse to make decisions unless there is some kind of data to support that this is in fact the right way to do it, then that’s honestly a first step. That’s a very difficult thing. I’ve been part of organizations where you never really get to the point of the switch flipping that you have to have data-driven decisions.

I’ve been part of organizations where people will look at tests and we’ll say, “Hey, this is a winning test,” and they’ll be like, “Eh, I don’t really like it. I don’t want to do that.” Sometimes you’ll just never be able to convince certain people with data, and that kind of stinks.

But I’ve been thankful to be part of a lot of organizations that have been largely data-driven. It’s really awesome that even in the current state, a lot of organizations now are making that big shift and totally overhauling culture to not just be data-driven, but obsessed with data to the point that it’s not just one data point making decisions; you’re getting data inputs from a bunch of different function teams.

So everyone’s working together to work on this shared story of data-driven as a whole. Experimentation is just one part of that. Obviously a big part of that. But it’s just good to see that finally people are taking that step to be more data-driven as a whole.

Iztok: So you’re saying we are already working with data, we are an organization that values data, so testing is just the next step. It’s like an additional next step in the right direction, where we will validate our things that we do, our decisions, our product changes, new products. We will evaluate it with our experimentation program.

Shiva: Yeah, exactly right. Experimentation is a very awesome way to get data that you want to validate doing things on the website. And it’s not just things like design. I’ve run experiments where we’ve tested marketing principles on our website, and once we tested them, we realized, “Hey, this marketing principle works a lot better than this one. Let’s go ahead and move with this one.” Then you communicate that back to the marketing team, and that changes their whole org too.

So it’s just a data point in terms of getting you a data point for feeding into overall data. But you have other things, like analytics. You have data scientists looking deeply into analytics data to get you the right data. Maybe you’re doing user research; that gets you data. Experimentation is just another data point feeding into your overall data collection, so to speak.

Iztok: One thing that you mentioned initially – you were talking about HiPPOs, about a culture where different opinions are not valued, evaluated, and opened up to testing, but where it’s more like “let’s do it like this because a boss or a leader says it.”

It’s a challenge to start with experimentation in such an environment, but sometimes it’s also a challenge when you run experiments because people might interfere because they find it interesting, and then they won’t let you do it properly, like to evaluate it properly – like you mentioned statistics; to find statistically significant results, how to interpret the results. Do you have any experience with this part, how to manage that?

Shiva: Yeah, for sure. It can sometimes be a challenge to explain to certain people when they have preconceived notions about how testing and CRO works. A lot of people have these preconceived notions, and they don’t really have any other data points to go off of, so a large part of it honestly is just education. Sitting them down and saying, “Look, this is how the statistics work. This is how you have to interpret the data.”

One example in particular – this is by far my personal favorite anecdote – one of the companies I started with, when I first got to the company, I was in charge of running experimentation for them. Their process in terms of experimentation was literally running a test for two days, looking at the results, seeing which one was positive, and then running that and then moving on. Obviously, I’m sure a bunch of CRO-ers are cringing at the fact that decisions were being made two days after a test is run.

The challenge there was the senior leadership didn’t quite have a firm grasp of the statistics behind A/B testing. They just saw a positive lift and they’re like, “Great, why wouldn’t we do this? This is winning. Let’s go and then move on.” But the reality is, as most CRO-ers know, letting a test run for two days is not good, usually. It’s usually quite bad because there’s a lot of fluctuation that goes into those tests early on.

In that particular example where I started with that organization, I didn’t necessarily have the rapport immediately to push back, so I had to figure out a way that I could drive the point home that this is just really bad, you guys are making the wrong decisions.

What I did was, in a super snarky way, the next time I was told to run a test after that first time I saw it happen and go from start to finish, they wanted to run it a certain way. I said, “Okay, I’ll do it that way,” but I literally ran it as an A/A test, not an A/B.

Iztok: Just for our listeners, by A/A, you mean basically you split traffic, A/A, and tested basically two same things at once.

Shiva: I literally made no changes on the website, exactly right.

Iztok: [laughs] Good.

Shiva: Version A made no changes because it was the control, and Version B made no changes because it was the control. So literally there were no differences to the website. I let that test run for two days. I saw a 20% uptick in CVR. The executives saw a 20% uptick in CVR and they’re like, “Okay, great, let’s push this up to 100%.” I was like, “Wait, wait, hold on. Are you sure you want to do this?” They laughed at me like, “Yeah, it’s 20% lift. Why wouldn’t we do that?” I was like, “This is an A/A test.” They got the curious looks like “I don’t know what that is.”

I said, “I haven’t made any changes on A versus B.” You could slowly see the gears starting to turn. They’re like, “Oh, wait. What?” I’m like, “Yeah, this is just really bad statistical analysis. You have to let this test run for a specific sample size that we have to predetermine and then see, based on those lifts. There are statistics behind it. You can’t just let a test run for two days and interpret those results, because that’s just an incorrect interpretation. It’s just flat out wrong.”
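To make the idea of a predetermined sample size concrete, here is a minimal sketch using the standard normal-approximation formula for comparing two conversion rates. The baseline rate, the lift worth detecting, the traffic figure, and the function names are illustrative assumptions, not numbers from the interview.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Visitors needed in EACH arm to detect the given relative lift
    with a two-sided test on two proportions (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_beta = NormalDist().inv_cdf(power)           # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


def days_to_run(n_per_variant, daily_visitors, variants=2):
    """Whole days needed to collect the planned sample across all arms."""
    return math.ceil(n_per_variant * variants / daily_visitors)


if __name__ == "__main__":
    # Hypothetical inputs: 3% baseline conversion, 10% relative lift, 6,000 visitors a day.
    n = sample_size_per_variant(baseline_rate=0.03, relative_lift=0.10)
    print(n, "visitors per variant,", days_to_run(n, daily_visitors=6000), "days")
```

The point of fixing these inputs before launch is that the stopping rule no longer depends on what the early numbers happen to look like.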

So I finally got that point across. I said, let’s let this test run for – I think the sample size worked out to about two weeks or two and a half weeks, something like that. I said, “Let’s let it run for two and a half weeks. Let me prove my point.” They’re like, “Okay, we got it.” So I let the test run for two and a half weeks, and then we saw that the results actually did finally normalize out to basically being a non-statistically significant conversion rate lift of some minuscule amount. Then they’re like, “Oh, okay, I think we finally get it.”

That was a really snarky thing that I probably shouldn’t have done as my second test there, but it paid off. Since that point, they have not peeked. That was a little bit of a trust-building moment. But yeah, they totally understood the principle and they’re like, “Okay, got it. We have to let the test actually run its duration.”
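The A/A story can be reproduced with a small simulation. The sketch below assumes two identical arms with the same true conversion rate and counts how often the observed "lift" is at least 20% in either direction after 2 days versus after 18 days. The 3% rate, 500 visitors per arm per day, and the function names are hypothetical choices for illustration.

```python
import random


def aa_lift_by_day(true_rate, visitors_per_day, days, rng):
    """Cumulative observed relative lift of arm B over arm A after each day,
    when both arms share the same true conversion rate (an A/A test)."""
    conv_a = conv_b = 0
    lifts = []
    for _ in range(days):
        conv_a += sum(rng.random() < true_rate for _ in range(visitors_per_day))
        conv_b += sum(rng.random() < true_rate for _ in range(visitors_per_day))
        lifts.append(conv_b / conv_a - 1 if conv_a else 0.0)
    return lifts


def share_of_big_swings(after_days, threshold=0.20, runs=200, seed=7,
                        true_rate=0.03, visitors_per_day=500):
    """Fraction of simulated A/A tests whose observed lift is at least
    `threshold` in absolute value when read after `after_days` days."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(runs):
        final_lift = aa_lift_by_day(true_rate, visitors_per_day, after_days, rng)[-1]
        if abs(final_lift) >= threshold:
            hits += 1
    return hits / runs


if __name__ == "__main__":
    print("share of A/A tests with a 20%+ swing after 2 days: ", share_of_big_swings(2))
    print("share of A/A tests with a 20%+ swing after 18 days:", share_of_big_swings(18))
```

Under these assumptions, large swings are common in the first days and become rare once the sample has grown, which is the behavior the anecdote describes.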

Iztok: I think it’s a great example, a great story. Also, in my experience, we just ran one case we’re discussing with the team – you mentioned one really important role as the leader of a CRO program, which is not only to educate why you need testing, but a lot of people get excited about testing, and then you need to also educate how to do them and how to interpret results.

As I said, one other case that I saw was not about statistical significance; the team was interpreting results without segmenting. Basically they were saying, “Okay, this doesn’t work,” but then when we drilled down into more specific segments, we said, “Okay guys, maybe the results are really good for one part of our audience which is receptive to this message, while for the other part of the audience” – in this case, it was existing customers versus people who are new to the brand – “this test and this message perform completely differently.”

I think it’s a similar thing: a wrong interpretation of the results gets made and the test gets overruled, while with more knowledge and more caution in interpreting results, you can get a different interpretation, maybe the right interpretation. Do you have any similar experience?

Shiva: Oh, totally. That’s a great example of new users versus returning users. That’s a great segment to look at. One of the ones that I think is commonly overlooked is desktop versus mobile, honestly.

That was one thing where another company that I had just started recently with – they had just ended a test from the previous CRO program manager who had joined. I was just peeking through his old results and looking at them, and he ran quite a few interesting tests, but he had called it saying, “No statistically significant lift, let’s move on.”

But when you actually drill down into the results and you look at desktop versus mobile, you’re like, wait a minute. This was a terrible test on mobile, and this was a really good test on desktop. It was a very simple, easy thing to think about, but then when you read into it – so whatever the principle that was being tested – I don’t remember off the top of my head, honestly – but whatever the principle that was being tested, it was a principle that resonated very well on desktop and didn’t on mobile.

As we all know, there are very different behaviors when it comes to desktop versus mobile, so that’s another commonly overlooked segment. People just run a test in aggregate and then say, “Oh, it lost” or “Oh, it won,” but it’s possible that, in that same vein, maybe the test won, but if you look at the results, it was actually really, really, really good on desktop and “meh” on mobile. Then you can interpret those results different ways.

I’ve been running a lot of tests across these programs, and the more tests I run, the more I look at those segments. Sometimes you can go down a rabbit hole and you just go super deep into segments, and then you’re like, “All right, I’m looking at desktop users who are female between the ages of 30-35 who have bought in the past” – it’s like, okay, you’ve narrowed it down to about three users with a lift of 300%, but that’s not useful. [laughs]

But yeah, totally, that’s something that’s commonly overlooked in segmentation of results, for sure.
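A segment breakdown like the one described here can be sketched in a few lines. The example below runs a two-proportion z-test per segment and refuses to read segments below a minimum size, which guards against the "three users with a 300% lift" trap. All counts, the 1,000-visitor floor, and the function names are made up for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def summarize_segments(results, min_visitors=1000):
    """Map each segment to (relative lift, p-value), or flag it as too small."""
    summary = {}
    for segment, (conv_a, n_a, conv_b, n_b) in results.items():
        if min(n_a, n_b) < min_visitors or conv_a == 0:
            summary[segment] = "too small to read"
            continue
        lift = (conv_b / n_b) / (conv_a / n_a) - 1
        summary[segment] = (round(lift, 3), round(two_proportion_p_value(conv_a, n_a, conv_b, n_b), 4))
    return summary


# Hypothetical results: (control conversions, control visitors, variant conversions, variant visitors)
results = {
    "all": (900, 30000, 915, 30000),
    "desktop": (400, 10000, 480, 10000),
    "mobile": (500, 20000, 435, 20000),
    "desktop, returning, narrow demographic": (1, 3, 3, 3),
}

for segment, outcome in summarize_segments(results).items():
    print(segment, outcome)
```

In this invented data the aggregate looks flat while desktop and mobile move in opposite directions. Keep in mind that the more segments you inspect, the more likely one of them looks significant by chance, so segment findings are best treated as hypotheses for a follow-up test.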

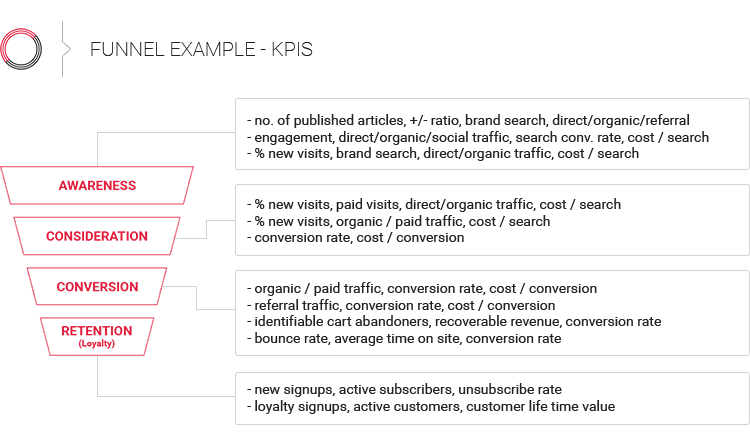

Iztok: One thing that I also see – at least in our industry, in the airline industry, there is a lot of talk about personalization. I try to explain to airlines that personalization and experimentation go hand in hand, because basically with personalization, you need to figure out how different audiences are different to different kinds of messages, different kinds of products, and you can test this out with experimentation. So you learn about your audiences and different kinds of audiences.

I don’t know how you see that, but I see that the good organizations that are smart with this approach are combining CRO and personalization together.

Shiva: Totally. One of the things that’s commonly overlooked, too – my personal opinion on this is that a lot of people just assume that personalization, or at least their execution of the personalization, is the right thing to do, and they just roll it out. But I have a very strong belief that you shouldn’t just personalize for the sake of personalizing.

One, you won’t be able to calculate the ROI. Experimentation is super helpful in not only getting you wins, but calculating what that lift is and how much revenue your program is actually driving. It’s very unique in that there’s certain programs that it’s difficult to attribute revenue specifically to branding campaigns and stuff like that. Sometimes it can be a little bit muddy. But within an A/B test, you literally know “this experiment that we ran gave us X amount of dollars in revenue as a lift.”
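As a rough illustration of attributing revenue to an experiment, the sketch below compares revenue per visitor between control and variant and extrapolates the difference to a projected number of visitors. The function name, the figures, and the simple linear extrapolation are assumptions for the example; a real program would only do this once the test has reached its planned sample size.

```python
def incremental_revenue(control_visitors, control_revenue,
                        variant_visitors, variant_revenue,
                        projected_visitors):
    """Return (relative lift in revenue per visitor, projected extra revenue)."""
    rpv_control = control_revenue / control_visitors
    rpv_variant = variant_revenue / variant_visitors
    lift = rpv_variant / rpv_control - 1
    extra = (rpv_variant - rpv_control) * projected_visitors
    return lift, extra


if __name__ == "__main__":
    # Hypothetical test: 10,000 visitors per arm, then 100,000 visitors after rollout.
    lift, extra = incremental_revenue(10_000, 50_000.0, 10_000, 52_500.0, 100_000)
    print(f"lift: {lift:.1%}, projected extra revenue: {extra:,.0f}")
```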

Iztok: This is a great point. That’s why we also preach first, even before experimentation, do proper analytics so you can measure how big these different audiences, different segments are, and then, like you said, before you personalize, make sure you can test. Otherwise you don’t know what works and what doesn’t. Just thinking “personalization will work because we will make simple variations without measuring and testing,” I think, like you said, it’s not the proper way. This is another great point why you combine these two.

Shiva: Totally.

Iztok: Going back to one thing we said, if you want to start with experimentation, you need a person who is enthusiastic to do this internal teaching, being an educator for the program, dealing with HiPPOs, explaining the value, showing the proper processes, how to measure – but then once you establish this, when you get this trust, when you’re starting with the first experiments and you get some wins, I think a lot of organizations struggle with scaling this up. What is your experience in how to scale, how to do then more experiments, how to do it on a little bit bigger scale?

Shiva: Let me take a couple steps back. I did want to mention a couple things about starting off with the program and then scaling it up. Just because you run a couple tests, doesn’t mean that you actually have the solid foundation baseline to scale it up.

The way that I thought about and my framework for starting and scaling an experimentation program is I have a couple steps. The first one is literally just assessing the tools that you have, figuring out – you obviously need an experimentation tool, whether that’s Optimizely, VWO, whatever it is, you obviously need the tool. You need the analytics behind that. As a value add, you have heatmapping, click tracking, etc.

So you first assess the tools that you have. Assess the resources that you have, which is designers, developers. Make sure you collaborate very well with them. I know you mentioned CRO-ers have to be passionate and excited for it, but honestly, it’s a lot of relationship-building, too, because there’s a lot of opinions when it comes to CRO, and CRO validates and invalidates a lot of opinions.

So you obviously have to be a little bit more humble, but you have to also be able to have the right communication style to communicate that with the key stakeholders, which are designers and developers, the marketing teams, senior leadership, etc. So just make sure that you establish very good relationships with them, because you want to collaborate with them and you want to establish a very transparent program.

You also want to hear their perspectives on things, too, because when you’re running these tests from a purely data perspective, like you’re just looking at GA, looking at user research, whatever it is, the people who are on the frontlines can give you a much more – I don’t want to say much more, but they can give you a totally different perspective that you hadn’t even considered. Talking to customer service folks, “people are complaining about the website” or “people are complaining about this,” then you can take those problems and possibly internalize them as tests.

Talking with program managers and saying, “What are the problems you’re seeing right now?” “We’re seeing that a lot of people aren’t really engaging with this new thing that we added,” so then working with them and figuring out, “What do you hope to accomplish with this? What are the goals for this?” and talking through these problems with them and hearing their perspectives is extremely valuable.

I think those are a couple things you should definitely establish before you even launch a test. And then obviously, you have to set up a roadmap with a prioritization framework that thinks about projected impact lifts, dev resources required, impact to revenue, etc.
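One way to picture such a prioritization framework is a simple score per test idea. The sketch below is an assumption, not a framework named in the interview: it multiplies the projected lift by the revenue flowing through the affected page and divides by the development effort, so cheap ideas on high-revenue pages rise to the top.

```python
from dataclasses import dataclass


@dataclass
class ExperimentIdea:
    name: str
    projected_lift: float    # expected relative lift, e.g. 0.02 for 2%
    dev_days: float          # estimated development effort in days
    revenue_exposed: float   # revenue passing through the affected page

    @property
    def score(self):
        # Hypothetical scoring rule: expected revenue gain per day of dev effort.
        # The 0.5-day floor avoids dividing by zero for trivial changes.
        return self.projected_lift * self.revenue_exposed / max(self.dev_days, 0.5)


def build_roadmap(ideas):
    """Order ideas from highest to lowest score."""
    return sorted(ideas, key=lambda idea: idea.score, reverse=True)


if __name__ == "__main__":
    backlog = [
        ExperimentIdea("redesigned seat map", 0.08, 20, 100_000),
        ExperimentIdea("checkout button copy", 0.02, 1, 100_000),
        ExperimentIdea("homepage banner", 0.03, 3, 40_000),
    ]
    for idea in build_roadmap(backlog):
        print(f"{idea.name}: {idea.score:,.0f}")
```

With these made-up numbers the small copy change outranks the large redesign, which matches the advice to start with the lowest-hanging fruit.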

But once you have started with the process of collection of ideas, talking with key stakeholders, and establishing those relationships and figuring out the resources you have, honestly, the biggest thing you can do is figure out that lowest hanging fruit opportunity and win as soon as possible. I think you mentioned that, where once you have a win, how do you scale up?

Before you even win, try and find the easiest win you possibly can. If you can get a quick win and you can quickly highlight the fact that CRO is worth doing – which it really is; there’s no arguments to be made with that. But as long as you can prove that and say, “Hey, we’re one for one. We ran this test and we lifted revenue by 5%. It’s not necessarily much, but man, one for one with our first test, that’s pretty good, right?”

Once you prove that out, then you start getting that buy-in from key stakeholders to then scale it up, because once they see that you did a test that won and you have those relationships set up, then you can work with the devs and be like, “Hey, man, we got this win. It’s 5% lift to revenue. Let’s hardcode this, but also, I’d like to work a lot closer with you guys on execution of these tests for some more complex things” – one, because that’s the right way to do it. Two, it’ll just be faster and more efficient.

And working with the design teams, too, making sure that they’re all onboard and they’ll have the unique perspective too, making sure you’re collaborating well with them. But honestly, when I’ve had experience with this, that’s what I’ve always done. As soon as I’ve established a baseline, immediately focus on the highest priority, like the lowest hanging fruit test that we could win quick. Then that helped immediately get the conversation going with the dev teams. Like, “All right, what do your resources look like? How can I work with you guys so that we can start prioritizing the dev resources for more of these tests?”

Start building out tests where you’re not just building a test and then running it, and then you’re building the next one and running it. You get so many levels of scale by enabling that parallelism. So not only are you running one test; you’re building five in the backlog, and then the dev teams are prioritizing those, but the dev teams all have their priorities, and they’re like, “Oh, actually, this is a three-story test that we can quickly knock out. We’ll add that to the next sprint.” Then getting a queue of these tests logged up so that as soon as one test ends, the next test immediately jumps on, and then scaling it from there is super helpful.

Iztok: Okay. We’ll take a short break, and after the break, we’ll continue talking to Shiva about how to scale up the conversion rate optimization process.

We are back with Shiva. Shiva, before the break you mentioned several interesting points. I think the first one that was really interesting to me is that if you’re a CRO program leader, you really need to be good in communication, especially internally. I did an interview with Karl Blanks, who is the author of the great CRO book, Making Websites Win, and he said that exactly, that that is basically one of the most underrated things about a CRO person, that they really need to be good with internal stakeholders, with developers, with UX people, to get this in place.

The second one, at the end, when you were talking about how to prepare to run tests in parallel, how to prepare tests properly to run them so that you can start more tests after the previous ends – one thing that I see with companies that do this on a bigger scale is having a dedicated development pipeline, dedicated development teams and UX teams specifically for CRO. Is this something that you see as well?

Shiva: Yeah, totally. I’ve talked to a number of CRO people where the matrix of the organization where CRO lies is super interesting. I don’t think there’s a right answer with how you matrix CRO into your org. You could have CRO living within the product team or living within the engineering team. Maybe CRO lies within the brand team. Maybe it’s its own function, CRO, and you have direct tier levels of conversion rate optimization, and then they function and talk to everyone.

I don’t think there’s a right way to do it, but I think one of the challenges personally that I’ve had – and I think this is not unique to me, honestly – just getting dedicated developer resources is always a challenge. And that’s not unique to CRO, honestly. That’s a problem that if you had 5 cents for every time you heard “I need the developer to do this” – it’s always a challenge. Dev resources has always been a challenge and limitation, because they have their hands tied to so many things, and they’re always busy doing priority stuff.

But yeah, one of the things that I’ve been working very closely on and scaling my own team is hiring a dedicated developer that would sit on my team and then hiring a dedicated UX designer that would sit on my team. That way, that person would work very closely with any of the other development teams and making sure they’re aligned with sprints and release cycles and stuff like that, but being able to work in a non-sprint environment where as soon as the test is ready, we can literally launch it, and they’re dedicated exclusively to building tests. There’s a specialization that you get with that that helps from a scale perspective. But just from a speed and efficiency standpoint, having that resource line on your team is awesome.

Then one of the things that I stress very much is prioritization of iterations. Let’s say you run a test – it wins with a conversion rate lift of 10%, and you want to move on to the next version of that test or see if you can push that concept even further. You look at the data and you realize, “Okay, we tested this this way. Here’s a couple other options as to how we can iterate on the next steps.”

If it goes back to the design team, it might immediately have to go to rescoping and it might be treated as a new test, versus the person who originally built it knows exactly, “I built it this way. Okay, I see what you’re saying,” and they can tweak it. Maybe it’s a sprint with a dev team; maybe it’s an hour with a dev that’s on your team and you can immediately launch a next iteration of that test and continue to capitalize on those wins.

Iztok: Good points. I think this is, like you said, really crucial. I see the organizations that have dedicated development teams for the CRO are the ones that can really do more tests and do them faster.

But one challenge that I see is when organizations are very keen to scale up testing because they see, “We got some early wins,” like you said, some quick wins, “now let’s do as many tests as possible because this will make us grow faster and we will be much better.” The quality of the tests then suffers. Basically, I see some cases where the test is treated as if it were the whole process of validation, preparation, and user research. People confuse testing with user research and they don’t prepare testing properly and they use it as “the test will show us what the user thinks” instead of testing being just a validation.

At least my experience is that much more time and effort should be spent before the testing itself, and then execution of the test is just the final part. What is your experience in this, or what’s your opinion on that?

Shiva: As a sidebar to that, I think it’s interesting with certain tests. You’ll see that user research will say, “You need to do X, Y, and Z because that’s what our users want.” So you’ll be like, okay, let’s do it. Then when you give users what they want, they don’t necessarily convert at a higher clip, and they sometimes actually decrease.

So sometimes it’s a weird mix where, in a lot of tests I’ve run, giving the user what they want isn’t necessarily the best thing for the user, because sometimes the user doesn’t know what they want. It’s an interesting balance of working with the design teams to be like, “Okay, these are things that are worth it, but also, there are things that we know have worked or these are things that we’re trying to test to see if they work or not.”

Iztok: Yeah, it’s sometimes like doing surveys. People say they will do one thing, but then when it comes to really doing it, really paying for it, it’s not always the same thing. This is one other thing that’s really crucial with testing, because you can really validate if users will do what they say they will.

Shiva: Exactly. Yeah, that’s a very succinct way to put it. That’s exactly right. [laughs]

Iztok: One thing when it comes to user research – as I said, personally I think that if you run the whole conversion optimization process, the full cycle with user research and analytics, what it does in the long run is help you really understand your users better, because you’re doing all these activities, these agile cycles of research and then optimization and testing, and it’s about learning. To me, knowing your users and then changing things so they fit your users best is the key.

I noticed one interesting thing about you, that you did improv classes in the past. Is that something that helps you with experimentation? I mean, like doing role-playing, maybe to relate better and put yourself in your users’ shoes?

Shiva: I’m not going to say correlation implies causation.

Iztok: [laughs]

Shiva: But after taking improv classes, I’ve noticed that I have won more often than I’ve lost. Is that a statistically significant result? Maybe. [laughs] But honestly, I took improv classes, one, because I just love improv. I think it’s fun, and everyone needs to go see an improv show because it’s just so much fun. But I mostly took it because it helps me personally talk to senior leadership and think on my feet. You just literally have to jump in and start saying words, and everything will just start coming to you.

So I love doing it because it’s fun for me, but it’s also really helped my public speaking. So I highly recommend it from that perspective.

Iztok: When I saw that you were doing it, I started thinking about this, and personally, I suffer from it as well. Even if we are trained to do experimentation and know we need to be aware of our biases and opinions, it’s still hard not to look at things from your own perspective. You are always biased. So I think improv, or things like that, which help you put yourself in another person’s shoes, look at things from another angle, and understand things differently, is a really good connection. Not just, like you said, being better at communicating, but also understanding and looking at things from different angles.

Shiva: Yeah, totally. One of the things I do a lot with my tests, to the point of poking holes in validating and not introducing biases and your interpretations, is I very much am a skeptic when it comes to testing. Well, I guess not testing. Maybe I’m just a skeptic in general in life. [laughs]

But with every test that I run, I don’t just look at macro level events – let’s say, for example, I’m running a test and I say, “Looks like conversion rate improved by 10%. Great, lock it up, move on.” I usually stress test the hell out of every single metric. So I’ll look at things like, did this increase bounce rate? Did it decrease bounce rate? Did people even engage with this new thing that I added? Did people even see it? If it was below the fold, how many people even saw it?

A lot of times we talk about false positives in statistics, and there are certain ways you can use statistics to validate or invalidate the false positives and the false negatives. But you can also layer on tracking of micro conversions – things like I just mentioned: bounce rate, how many people saw an element, how many people interacted with an element. Those are ways you can stress test your tests.

So you validate that conversion rate increased, but also, we saw an improvement in let’s say form starts or people who added to shopping cart, and we saw an increase in the amount of people who then got to maybe that next step or that final step, and then we saw an increase in conversion rate. Those are ways you can help validate and poke holes in the tests so that you’re not just looking on a macro level and saying “it’s statistically significant, boom, on to the next one,” because oftentimes you might hit some false positives with that.
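As a rough illustration of the stress test Shiva describes, the sketch below checks every step of a funnel, not only the final conversion. It is a minimal sketch under stated assumptions: the step names, the visitor counts, and the 0.05 threshold are all hypothetical, and the two-proportion z-test is a common textbook choice rather than the method used in the interview.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference between two rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal CDF.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Hypothetical data: visitors per variant and counts per funnel step,
# each given as (control, variation).
VISITORS = (10_000, 10_000)
FUNNEL = {
    "saw_element": (6_000, 6_100),
    "add_to_cart": (1_200, 1_350),
    "checkout_start": (600, 690),
    "purchase": (300, 345),
}


def funnel_report(funnel, visitors, alpha=0.05):
    """Compute relative lift and significance for every funnel step."""
    report = {}
    for step, (count_a, count_b) in funnel.items():
        _, p_value = two_proportion_z_test(
            count_a, visitors[0], count_b, visitors[1]
        )
        rate_a = count_a / visitors[0]
        rate_b = count_b / visitors[1]
        report[step] = {
            "lift": rate_b / rate_a - 1,
            "p_value": p_value,
            "significant": p_value < alpha,
        }
    return report


if __name__ == "__main__":
    for step, stats in funnel_report(FUNNEL, VISITORS).items():
        print(
            f"{step:15s} lift={stats['lift']:+.1%} "
            f"p={stats['p_value']:.4f} significant={stats['significant']}"
        )
```

If the macro conversion shows a lift but the upstream steps do not move in the same direction, that is a hint the headline result may be a false positive and deserves a closer look before it is declared a win.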

Iztok: Yeah, I agree. I think it goes along the lines of what we talked about before – not only seeing the tests, win or lose, but also trying to understand as much as possible why, to get the learning out from it.

Shiva: Yeah. Exactly to that point, it’s not just doing that to stress test your test, literally, but it’s also from a test and learn perspective. I am very much an advocate of test to learn, not test to win. If you test to learn, you will win a lot more.

What I mean by that is testing principles and testing things to see, what is behavior on the site? What are people naturally gravitating towards or not gravitating towards? You can promote the things that people gravitate towards that aid in the conversion path, and then maybe deprioritize or tweak the things that people aren’t resonating with to see if maybe that becomes a value add for them. A lot of tests I run – actually, I wouldn’t say a lot – 100% of the tests I run, I learn something out of that test. Whether it wins or loses, you learn.

For example, let’s say you run a test where you have product reviews and you add maybe additional product review information on your website or something like that. Let’s just say for example it’s Amazon, and you’re testing different types of review facets, something like that. Let’s say you add in three additional lines of additional review content, and it didn’t resonate with people. But then you look at the heatmaps and you’re like, “It looks like people did read it, but maybe it was a detriment because conversion rate went down and visits to product pages went down.”

So you take those cuts of data and figure out those next steps and those next iterations from that first test you ran. You might lose a little bit in the beginning, but your wins will be far greater than “I have this crazy idea to totally test this brand new landing page,” and then if it loses, you’re like, “I tested 50 different things on here. I don’t know why it lost, so let’s just try the next big thing.” It becomes a challenge.

There’s definitely a balance between the level of disruption and the level of changes that you make, but I’m very much a firm believer in making sure that with every test you run, whatever the results are, you’re setting yourself up for success by basically tracking the hell out of everything and making sure that you learn. Whether the result is positive or negative, you learn something from it.

Iztok: I like the expression that you used: not test to win, but test to learn. This is what I think the conversion optimization process and any experimentation is all about.

It was a great chat, Shiva. I really enjoyed it. The next time, if I go to Texas, I need to reach out to see your improv show, yeah? You’ll have to tell me where to find you and see you live.

Shiva: Oh, absolutely. And I am going to definitely make sure I get you a taco, because they’re the best in Austin.

Iztok: Okay. Thank you again, and wish you all the best with your future experimentation. I know you’ll have fun. It was great talking to you.

I am passionate about digital marketing and ecommerce, with more than 10 years of experience as a CMO and CIO in travel and multinational companies. I work as a strategic digital marketing and ecommerce consultant for global online travel brands. Constant learning is my main motivation, and this is why I launched Diggintravel.com, a content platform for travel digital marketers to obtain and share knowledge. If you want to learn or work with me, check our Academy (learning with me) and Services (working with me) pages in the main menu of our website.
